Most AI image generators have a “tell.” The faces are too polished, the colors drift from what you asked for, and any text longer than two words becomes abstract art. Wan2.7-Image goes after all three problems at once — and, at least from what we’ve tested so far, the results are genuinely impressive.

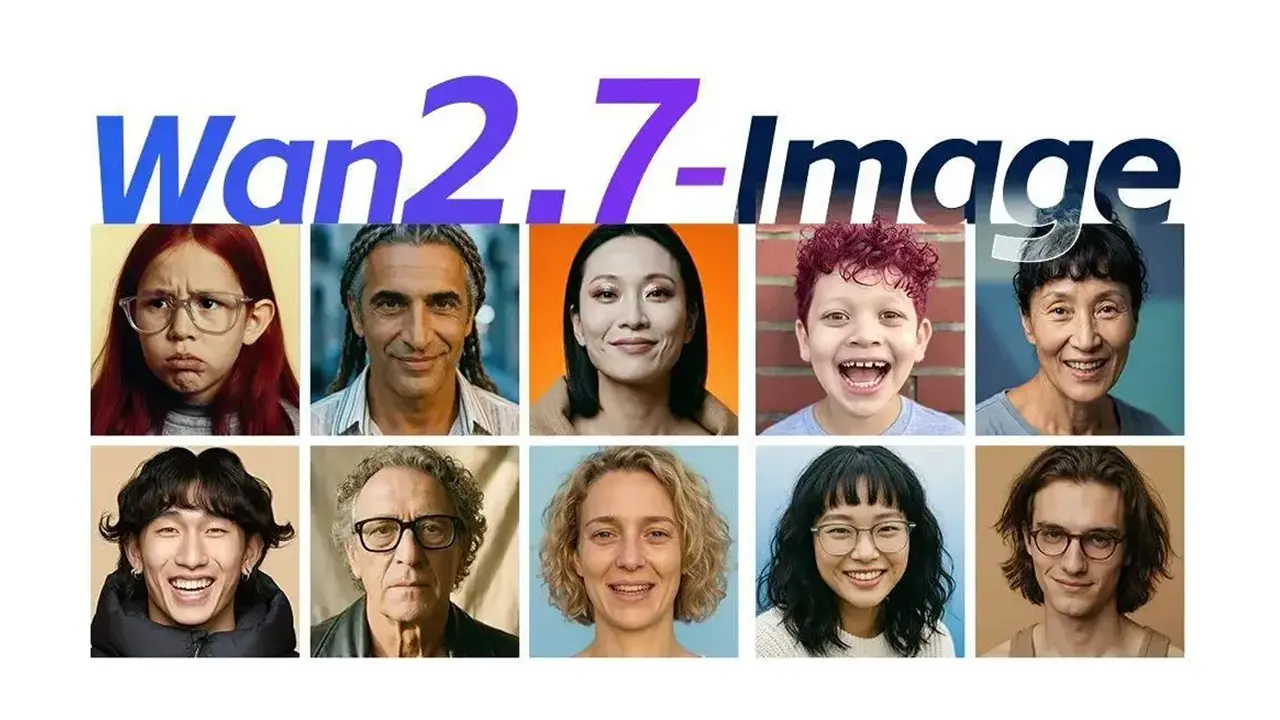

Faces That Don’t All Look Like the Same Person

The biggest visual giveaway of AI-generated portraits is sameness. Swap the hair and outfit, and you’d swear every face came from the same mold. Wan2.7-Image introduces granular face sculpting directly in the prompt: you can specify bone structure, face shape (round, square, oblong), eye style (narrow, deep-set, wide), and other subtle features.

The result isn’t just “different” — it’s believable. Faces look like they belong to actual, specific individuals rather than a blended average. For anyone producing character art, storyboards, or brand personas, this is a significant step toward usable output without manual retouching.

Wan2.7-Image Color Palette: Precision Designers Actually Need

If you’ve ever tried to match a brand’s exact color scheme in an AI-generated image, you know the pain: the model interprets “navy blue” however it feels like, and the output rarely aligns with your brand guide.

Wan2.7-Image ships with a palette extraction feature. Drop in a reference image — a mood board, a painting, a screenshot from your design system — and the model extracts the color distribution and applies it to the generated output. You keep your composition and subject; the palette shifts to match the reference.

For designers and marketing teams working under strict brand guidelines, this removes an entire round of post-production color correction. We tested it with a Pantone swatch card and a Van Gogh painting — both transfers were remarkably faithful.

Wan2.7-Image Text Rendering: 3K Tokens, Full Pages

Text rendering has been the Achilles’ heel of image generation. Most models can handle a short headline, maybe. Ask for a paragraph, and you get a beautiful image filled with beautifully meaningless squiggles.

Wan2.7-Image pushes the boundary to 3,000 tokens — enough to fill an entire A4 page. We’re talking about generating images of research papers, data tables, math formulas, and dense infographics where every character is legible and correctly placed. The model supports 12 languages including English, Chinese, Japanese, Korean, and major European languages.

We threw a full-page product spec sheet at it — fine print, bullet lists, footnotes — and the output was print-ready. That’s a first for us.

Point-and-Edit: Interactive Editing Done Right

Generation is only half the story. Wan2.7-Image natively supports interactive editing: select a region, and you can add, replace, move, or realign elements within it — all without leaving the model or exporting to a separate tool.

This works the way you’d expect a layer-based editor to feel, except there are no layers — just natural-language instructions applied to a bounding box. “Move the coffee cup to the left.” “Replace the background with an outdoor café.” It’s fast, and the edits blend seamlessly with the rest of the image.

Multi-Subject Consistency Across Up to 9 References

Generating a single great image is one thing. Keeping a character, product, or style consistent across a series of images is where most models fall apart.

Wan2.7-Image supports up to 9 reference images for subject consistency. Feed it your hero character from multiple angles, and it will maintain identity across new scenes, poses, and compositions. This is huge for:

- E-commerce product photography (same model, different outfits/settings)

- Storyboard and comic generation (same characters, different panels)

- Brand campaigns (consistent visual identity across assets)

Batch Generation: Up to 12 Images at Once

Need a full set of visuals in one go? Wan2.7-Image can generate up to 12 images per batch while maintaining stylistic coherence. Think PPT decks, social media kits, multi-angle product views, or a complete storyboard — all from a single session.

We used Wan 2.7 Image to generate an 8-image product lifestyle series and a 6-panel comic strip. The consistency held up across every frame.

Under the Hood: Why It Works Better

The technical backbone is what Alibaba calls a unified generation-and-understanding architecture. Instead of treating image generation and image comprehension as separate tasks, Wan2.7-Image maps text and visual semantics into a shared latent space. The model doesn’t have to “guess” what your words mean — text and image are tightly coupled from the start.

Training also incorporates multimodal instructions (text + image inputs together), pushing the model beyond surface-level pixel fitting into what feels like genuine semantic reasoning about layout, composition, and content.

We’ve integrated Wan2.7-Image into A2E so you can test it immediately — face sculpting, palette transfer, long-form text rendering, and all. Drop in a detailed prompt and see for yourself how far image generation has come.